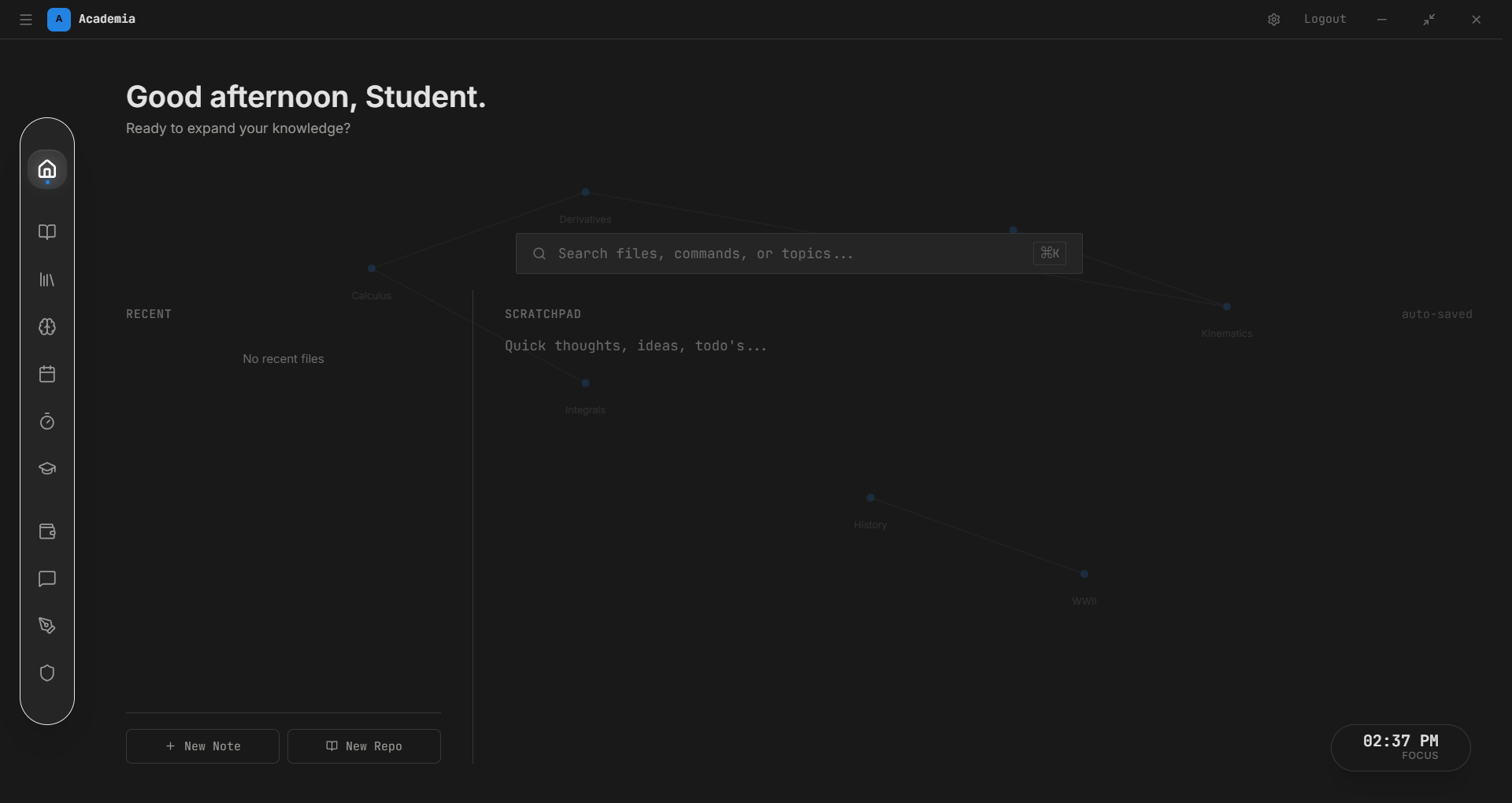

Agent Memory Governance Layer for Task-Driven LLM Agents

Mini Project

This project designs a memory governance layer that controls what an LLM-based agent stores, retrieves, or discards during long-running tasks. The focus is on task-conditioned memory selection and on preventing context pollution.

The Problem

Long-running LLM agents accumulate context indiscriminately: stale task states, irrelevant tool outputs, and contradictory facts pollute the active context window, making the agent's behaviour less reliable and less predictable.

What I'm Building

A governance layer that sits between the agent's task executor and its memory store. It intercepts read/write calls and applies relevance filters, recency scoring, and task-conditioned rules to decide what actually gets retained.
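As a rough illustration of the interception idea, the sketch below scores each item by a weighted mix of tag-based relevance and exponentially decayed recency, then applies one threshold on both write and read. All names here (`MemoryItem`, `GovernedMemory`, `task_tags`), the Jaccard relevance measure, the one-hour half-life, and the weights are assumptions made for the example, not the project's actual design.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class MemoryItem:
    text: str
    created_at: float            # seconds since epoch
    tags: frozenset = frozenset()


def recency_score(item, now, half_life_s=3600.0):
    """Exponential decay: 1.0 when fresh, 0.5 after one half-life."""
    age = max(0.0, now - item.created_at)
    return 0.5 ** (age / half_life_s)


def relevance_score(item, task_tags):
    """Jaccard overlap between the item's tags and the active task's tags."""
    if not item.tags or not task_tags:
        return 0.0
    return len(item.tags & task_tags) / len(item.tags | task_tags)


class GovernedMemory:
    """Hypothetical governance layer wrapping a plain in-memory list."""

    def __init__(self, threshold=0.3, relevance_weight=0.7):
        self.threshold = threshold
        self.w = relevance_weight
        self._items = []

    def score(self, item, task_tags, now):
        rel = relevance_score(item, task_tags)
        rec = recency_score(item, now)
        return self.w * rel + (1.0 - self.w) * rec

    def write(self, item, task_tags, now=None):
        """Store the item only if it scores above the threshold."""
        now = time.time() if now is None else now
        if self.score(item, task_tags, now) >= self.threshold:
            self._items.append(item)
            return True
        return False

    def read(self, task_tags, now=None, k=5):
        """Return up to k stored items that still pass the threshold, best first."""
        now = time.time() if now is None else now
        scored = [(self.score(i, task_tags, now), i) for i in self._items]
        kept = [pair for pair in scored if pair[0] >= self.threshold]
        kept.sort(key=lambda pair: pair[0], reverse=True)
        return [item for _, item in kept[:k]]
```

Because the same scoring runs at read time, an item that was worth storing for one task can still be withheld when the active task changes or the item goes stale. That is one simple way to make selection task-conditioned.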

The layer separates short-term context (current task scratch), episodic memory (summarised past runs), and semantic memory (domain facts) into distinct stores, each with explicit promotion and eviction policies.
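One possible shape for the three stores is sketched below. The policies are illustrative assumptions: the scratch store evicts its oldest entry on overflow, finishing a task promotes a summary of the scratch into episodic memory, and a fact is promoted to semantic memory once it has been reported in `promote_after` separate tasks. `TieredMemory` and the string-joining summariser are hypothetical. A real system would presumably summarise with an LLM call.

```python
from collections import Counter, deque


class TieredMemory:
    """Hypothetical three-tier store with simple promotion/eviction rules."""

    def __init__(self, scratch_capacity=3, promote_after=2, summarise=None):
        # Short-term: bounded deque, so the oldest note is evicted on overflow.
        self.scratch = deque(maxlen=scratch_capacity)
        # Episodic: one summary per finished task.
        self.episodic = []
        # Semantic: facts that recurred across enough tasks.
        self.semantic = set()
        self.promote_after = promote_after
        self._fact_counts = Counter()
        # Placeholder summariser: joins notes with a separator.
        self.summarise = summarise or (lambda notes: " | ".join(notes))

    def note(self, text):
        """Record a scratch note for the current task."""
        self.scratch.append(text)

    def end_task(self, facts=()):
        """Promote scratch to an episodic summary, then clear the scratch.

        Facts reported at task end are counted once per task; a fact seen
        in `promote_after` tasks is promoted to semantic memory.
        """
        if self.scratch:
            self.episodic.append(self.summarise(list(self.scratch)))
        self.scratch.clear()
        for fact in set(facts):
            self._fact_counts[fact] += 1
            if self._fact_counts[fact] >= self.promote_after:
                self.semantic.add(fact)
```

Keeping promotion as an explicit step at task end means nothing moves from scratch into longer-lived memory as a side effect of ordinary writes.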

Research Direction

The target is multi-agent systems, where memory bleed between agents is a security concern. Explainability is a first-class requirement: every memory decision should be auditable.
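To make the auditability requirement concrete, here is a minimal sketch of a store that keeps each agent's memory in its own namespace, denies cross-agent reads unless the owner has explicitly shared a key, and records every decision with a reason. The class and field names (`AuditedStore`, `Decision`, `share`) and the sharing rule are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    agent_id: str   # who asked
    action: str     # "write", "share", or "read"
    owner_id: str   # whose namespace was touched
    key: str
    allowed: bool
    reason: str     # human-readable justification for the audit trail


class AuditedStore:
    """Hypothetical per-agent store that logs every memory decision."""

    def __init__(self):
        self._data = {}       # owner_id -> {key: value}
        self._shared = set()  # (owner_id, key) pairs readable by other agents
        self.audit_log = []   # append-only list of Decision records

    def _record(self, agent_id, action, owner_id, key, allowed, reason):
        self.audit_log.append(
            Decision(agent_id, action, owner_id, key, allowed, reason)
        )

    def write(self, agent_id, key, value):
        """Agents may only write into their own namespace."""
        self._data.setdefault(agent_id, {})[key] = value
        self._record(agent_id, "write", agent_id, key, True, "owner write")

    def share(self, owner_id, key):
        """Owner opts a single key in to cross-agent reads."""
        self._shared.add((owner_id, key))
        self._record(owner_id, "share", owner_id, key, True,
                     "owner granted cross-agent read")

    def read(self, agent_id, owner_id, key):
        """Return the value, or None if the read is denied or the key is absent."""
        if agent_id == owner_id:
            allowed, reason = True, "owner read"
        elif (owner_id, key) in self._shared:
            allowed, reason = True, "explicitly shared"
        else:
            allowed, reason = False, "cross-agent read not shared"
        self._record(agent_id, "read", owner_id, key, allowed, reason)
        if not allowed:
            return None
        return self._data.get(owner_id, {}).get(key)
```

Denied reads are logged as well as allowed ones, so the log can answer both "why did the agent see this?" and "who tried to read what?".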